I’m thrilled to announce that my first single-author paper has just been published in Physical Review E:

Field-induced freezing in the unfrustrated Ising antiferromagnet, Adam Iaizzi, Physical Review E 102, 032112 (2020) (paywalled; a free PDF and an arXiv version are also available)

This paper continues the theme of my research career, which could loosely be described as: “try adding a magnetic field to an antiferromagnet and see if something interesting happens.” In this case, I added a magnetic field to the classical 2D Ising antiferromagnet and studied it with the simplest implementation of Monte Carlo: the Metropolis(-Rosenbluth-Teller) algorithm. At low temperatures I found that the simulations never reached the ground state. Instead, they got trapped in local energy minima from which they never escaped: frozen states with finite magnetization. There are so many of these frozen states that a simulation is effectively guaranteed to encounter one before it can reach the correct ground state. These frozen states can be described by simple rules based on stable local configurations.

Key points

The model

I’ll quickly summarize the main points of this paper here. We start with the Ising antiferromagnet in 2D with an external magnetic field. This is a 2D square lattice where each lattice point has a spin, a tiny magnet that can point up or down. Each spin wants to point opposite to its neighbors, but the magnetic field (h) wants to point them all in the same direction. For weak enough fields, the ground state is an alternating pattern of up and down spins in a checkerboard arrangement. This is the simplest model of a magnet that exhibits a phase transition, but we’re going to be interested in the behavior at temperatures far below that transition.
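To make the model concrete, here is a small Python sketch (the real code is Fortran 90). It assumes the common sign convention H = J Σ s_i s_j - h Σ s_i, where the first sum runs over nearest-neighbor pairs, J = 1 > 0 is antiferromagnetic, and the L×L lattice has periodic boundaries. The function name `energy_per_spin` is hypothetical. At h = 0, the sketch shows that the checkerboard has a lower energy than the fully aligned state.

```python
J = 1.0  # assumed coupling; J > 0 makes neighboring spins prefer to anti-align

def energy_per_spin(spins, h):
    """Energy per spin for H = J * sum_<ij> s_i s_j - h * sum_i s_i on an
    L x L square lattice with periodic boundaries (spins[i][j] is +1 or -1)."""
    L = len(spins)
    total = 0.0
    for i in range(L):
        for j in range(L):
            s = spins[i][j]
            # Count each bond once: pair every site with its right and down neighbors.
            total += J * s * (spins[i][(j + 1) % L] + spins[(i + 1) % L][j])
            total -= h * s
    return total / (L * L)

L = 8  # even, so the checkerboard is compatible with periodic boundaries
checkerboard = [[1 if (i + j) % 2 == 0 else -1 for j in range(L)] for i in range(L)]
polarized = [[1] * L for _ in range(L)]

# In zero field the checkerboard sits at -2J per spin, the aligned state at +2J.
print(energy_per_spin(checkerboard, 0.0), energy_per_spin(polarized, 0.0))  # -2.0 2.0
```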

The method

We’re going to study the Ising model with Metropolis-algorithm Monte Carlo (MC). This means starting with a randomized spin state and updating it with a stochastic process: we pick a spin at random and try to flip it. If the flip would lower the energy, we always accept it. If it would raise the energy by ΔE, we accept it with probability exp(−ΔE/T), in units where Boltzmann’s constant is 1. Repeat this many times and you should eventually reach equilibrium or, at T=0, the ground state. For simplicity, let’s focus on zero temperature (absolute zero). There, that probability vanishes, so we only accept a flip if it doesn’t raise the energy.
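The update rule can be sketched in a few lines of Python. This is not the author’s Fortran code. It assumes H = J Σ s_i s_j - h Σ s_i with J = 1 and periodic boundaries, and the helper names are hypothetical. Under that convention, flipping spin s with neighbor sum m costs ΔE = 2s(h - Jm). Flips with ΔE ≤ 0 are accepted, and uphill flips are accepted with probability exp(-ΔE/T), which is zero at T = 0.

```python
import math
import random

J = 1.0  # assumed convention: H = J * sum_<ij> s_i s_j - h * sum_i s_i, J > 0

def flip_energy_change(spins, i, j, h):
    """Energy change from flipping spin (i, j) on a periodic L x L lattice.

    Only the spin and its four nearest neighbors enter: dE = 2*s*(h - J*m),
    where m is the sum of the neighboring spins."""
    L = len(spins)
    m = (spins[(i + 1) % L][j] + spins[(i - 1) % L][j]
         + spins[i][(j + 1) % L] + spins[i][(j - 1) % L])
    return 2 * spins[i][j] * (h - J * m)

def metropolis_step(spins, h, T, rng):
    """Pick one spin at random and flip it with the Metropolis rule.

    Accept with probability min(1, exp(-dE/T)); at T = 0 this reduces to
    accepting only flips with dE <= 0. Returns True if the spin was flipped."""
    L = len(spins)
    i, j = rng.randrange(L), rng.randrange(L)
    dE = flip_energy_change(spins, i, j, h)
    if dE <= 0:
        accept = True            # downhill and zero-energy flips always go through
    elif T == 0:
        accept = False           # at T = 0 uphill flips are never accepted
    else:
        accept = rng.random() < math.exp(-dE / T)
    if accept:
        spins[i][j] = -spins[i][j]
    return accept

# Usage: 100 sweeps (L*L attempts each) at T = 0 from a random start.
rng = random.Random(1)
L = 16
spins = [[rng.choice((-1, 1)) for _ in range(L)] for _ in range(L)]
for _ in range(100 * L * L):
    metropolis_step(spins, h=1.5, T=0.0, rng=rng)
```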

The results

When you turn on the field, it tries to align all the spins in one direction, breaking the checkerboard, but the field must be very strong to do that (h>4, in units of the nearest-neighbor coupling). For any h<4, the field doesn’t change the ground state at all, so we would expect the magnetization to be zero. But when we try this in the simulation, we get something totally different.
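A quick energy count makes the threshold h = 4 plausible. This assumes the convention H = J Σ s_i s_j - h Σ s_i with J > 0 on a square lattice of N sites. Each site has four neighbors, which gives 2N bonds. The calculation compares only two candidate states, the checkerboard and the fully polarized state, and is not the paper’s derivation:

```latex
\frac{E_{\mathrm{checkerboard}}}{N} = \frac{2N(-J) - h \cdot 0}{N} = -2J,
\qquad
\frac{E_{\mathrm{polarized}}}{N} = \frac{2N(+J) - hN}{N} = 2J - h,

E_{\mathrm{polarized}} < E_{\mathrm{checkerboard}}
\iff 2J - h < -2J
\iff h > 4J.
```

With J = 1, this gives the h > 4 quoted above.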

The magnetization should be zero until h>4, but instead it forms two plateaus. What’s going on here? The procedure described above (starting with a random spin state and making updates) actually corresponds to a quench. The randomized spin state is like a high-temperature state, and starting the MC updates at T=0 is like dunking hot steel into a bath of ice water.

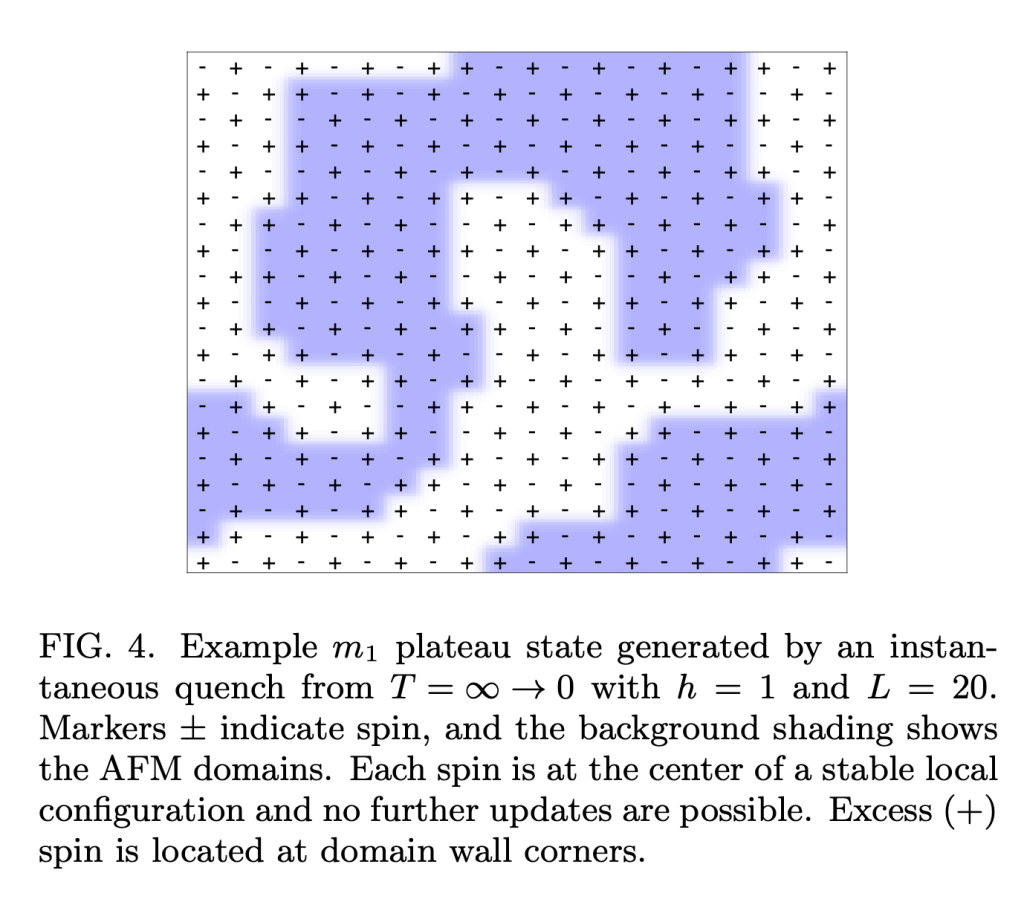
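The quench itself is easy to sketch. Here is a Python version that assumes the same convention as above (H = J Σ s_i s_j - h Σ s_i, J = 1, periodic boundaries); `quench_magnetization` and its parameters are hypothetical names. It starts from random spins, as if from infinite temperature, runs T = 0 Metropolis sweeps, and stops once no single flip can lower or keep the energy. Running it over a range of h is a quick way to look for plateaus, but no particular plateau values are claimed here.

```python
import random

J = 1.0  # assumed convention: H = J * sum_<ij> s_i s_j - h * sum_i s_i, J > 0

def flip_energy_change(spins, i, j, h):
    """dE = 2*s*(h - J*m) for flipping spin (i, j); m = sum of its 4 neighbors."""
    L = len(spins)
    m = (spins[(i + 1) % L][j] + spins[(i - 1) % L][j]
         + spins[i][(j + 1) % L] + spins[i][(j - 1) % L])
    return 2 * spins[i][j] * (h - J * m)

def quench_magnetization(L, h, max_sweeps=1000, seed=0):
    """Random start (like infinite temperature), then T = 0 Metropolis sweeps.

    Returns the magnetization per spin once every single-spin flip would
    raise the energy, or after max_sweeps sweeps, whichever comes first."""
    rng = random.Random(seed)
    spins = [[rng.choice((-1, 1)) for _ in range(L)] for _ in range(L)]
    for _ in range(max_sweeps):
        for _ in range(L * L):  # one sweep = L*L random single-spin attempts
            i, j = rng.randrange(L), rng.randrange(L)
            if flip_energy_change(spins, i, j, h) <= 0:
                spins[i][j] = -spins[i][j]
        # Stop early once the state is frozen: no flip with dE <= 0 remains.
        if all(flip_energy_change(spins, i, j, h) > 0
               for i in range(L) for j in range(L)):
            break
    return sum(map(sum, spins)) / (L * L)

for h in (0.5, 1.5, 2.5, 3.5, 4.5):
    print(h, quench_magnetization(32, h))
```

Above h = 4 every down spin can always lower the energy by flipping, so the quench ends fully polarized. Below it, the run stops wherever it first freezes.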

In a quench the system has no time to find the correct ground state. Instead, it stops at the first local energy minimum it encounters and gets stuck there. Quenching is an important process in materials engineering because it can be used to freeze microscopic defects in place, which can actually make materials like metals stronger. For our Ising system, the frozen states look like this:

In this state, every spin is locally stable, i.e., flipping any single spin would increase the energy. Since every available move would increase the energy, our algorithm can never escape this state. To lower the energy, you would need to flip several spins at once. It’s the equivalent of water trapped in a mountain lake. The water would like to drain down to the sea, but there is no path out of the lake that doesn’t involve going uphill. Thus, the system becomes stuck in these local energy minima.
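The stability condition in this paragraph translates directly into a check. Here is a hedged Python sketch under the same assumed convention (H = J Σ s_i s_j - h Σ s_i, J = 1, periodic boundaries). `is_frozen` is a hypothetical helper. The test pattern, with spin (i, j) pointing down when i + 2j is divisible by 5, is a made-up example and is not taken from the paper.

```python
J = 1.0  # assumed convention: H = J * sum_<ij> s_i s_j - h * sum_i s_i, J > 0

def flip_energy_change(spins, i, j, h):
    """dE = 2*s*(h - J*m) for flipping spin (i, j); m = sum of its 4 neighbors."""
    L = len(spins)
    m = (spins[(i + 1) % L][j] + spins[(i - 1) % L][j]
         + spins[i][(j + 1) % L] + spins[i][(j - 1) % L])
    return 2 * spins[i][j] * (h - J * m)

def is_frozen(spins, h):
    """True if every single-spin flip strictly raises the energy.

    A T = 0 single-spin-flip simulation that accepts only dE <= 0 can then
    never leave the state, even if its energy is above the ground state."""
    L = len(spins)
    return all(flip_energy_change(spins, i, j, h) > 0
               for i in range(L) for j in range(L))

L = 10  # multiple of 5 so the hypothetical pattern is periodic
pattern = [[-1 if (i + 2 * j) % 5 == 0 else 1 for j in range(L)] for i in range(L)]
print(is_frozen(pattern, 3.0), is_frozen(pattern, 1.0))  # True False
```

In this pattern, each down spin is surrounded by four up spins and each up spin has exactly one down neighbor. Under the assumed convention, at h = 3 it has magnetization 3/5 and an energy of -1.4 per spin. That is above the checkerboard’s -2, yet no single flip can lower it.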

Previous studies have found something similar in the Ising ferromagnet (quenching to T=0 with no field). In that case, the correct ground state is only reached 2/3 of the time. The difference here is that there are so many local energy minima that the simulation is almost guaranteed to get trapped in one before it can reach the ground state.

Simple rules for frozen states

To understand the frozen states, we need to see the system the way the algorithm does. Each attempt to flip a spin only considers the one randomly selected spin and its four nearest neighbors. The only possible outcomes are leaving the spin as it is or flipping it to the opposite direction. If there is a way to lower the energy that requires flipping two or more spins at a time, that move is totally invisible to our algorithm.

Since there are only ever five spins under consideration at a time, we can simply list all the possible initial and final states (see Fig. 3). When we do this, we can determine which local configurations are stable for a given field. We can then construct a stable state out of any combination of these local configurations. The result is a series of simple rules that describe the possible frozen states in each of the two plateaus.
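This enumeration can be sketched in Python. The sketch assumes the convention H = J Σ s_i s_j - h Σ s_i with J = 1, and `local_configurations` is a hypothetical name. It groups the 2 × 16 five-spin arrangements by the center spin and the number of up neighbors, because the flip energy depends only on those. For a given h, it labels each class as stable (the flip costs energy), marginal (the flip is free), or unstable (the flip lowers the energy).

```python
J = 1.0  # assumed convention: H = J * sum_<ij> s_i s_j - h * sum_i s_i, J > 0

def local_configurations(h):
    """Classify every (center spin, number of up neighbors) class for field h.

    A single-spin update sees only the center spin s and its four neighbors,
    and the energy change depends on them only through m = neighbor sum.
    Returns a list of (s, n_up, dE, label) tuples."""
    rows = []
    for s in (+1, -1):
        for n_up in range(5):        # 0..4 of the four neighbors point up
            m = 2 * n_up - 4         # neighbor sum: n_up*(+1) + (4 - n_up)*(-1)
            dE = 2 * s * (h - J * m)
            if dE > 0:
                label = "stable"
            elif dE == 0:
                label = "marginal (zero-energy flip)"
            else:
                label = "unstable"
            rows.append((s, n_up, dE, label))
    return rows

for s, n_up, dE, label in local_configurations(3.0):
    print(f"center {s:+d}, {n_up} up neighbors: dE = {dE:+.0f}  {label}")
```

A frozen state is then any arrangement in which every site falls into a "stable" class.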

The valleys between the plateaus occur because the system is able to reach the correct ground state at those points. These valleys correspond to cases where at least one pair of possible local states is unstable: the spin can flip back and forth between them without changing the energy. This allows the system to explore the space until it finds a way to jump down an energy level, a process that continues until the correct ground state is reached.
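A back-of-the-envelope check suggests where such zero-energy flips can occur. It assumes the convention H = J Σ s_i s_j - h Σ s_i with J > 0, and it is not the paper’s derivation. Flipping spin s_i with neighbor sum m_i costs:

```latex
\Delta E_i = 2 s_i \left( h - J m_i \right),
\qquad
m_i = \sum_{j \in \mathrm{nn}(i)} s_j \in \{-4, -2, 0, 2, 4\},

\Delta E_i = 0 \iff h = J m_i
\quad\Longrightarrow\quad
h \in \{0,\ 2J,\ 4J\} \quad (h \ge 0).
```

So, at least under this convention, back-and-forth flips exist only at these isolated field values. With J = 1, that would place the valley between the two plateaus at h = 2.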

A long and winding road

This project traces its roots back quite a long way, to 2017, when I was trying to understand an anomaly in my data for an unrelated quantum problem. As a test, I threw together an Ising model MC program and ended up seeing something that looked a lot like Fig. 1 above. At first sight, I was sure something must be wrong with my code, because the magnetization shouldn’t form weird plateaus like that, and it definitely shouldn’t have those little dips between the plateaus.

It took a lot of testing and double- and triple-checking to convince myself I was seeing a real effect. Then came an even longer process of talking to people and reviewing the literature to make sure that no one had discovered this before. To my surprise, the effect turned out to be real, and no one seems to have written about it before. Throughout this process I was very grateful to have the advice of Paul Krapivsky, a colleague from Boston University who is an expert on these matters and who confirmed both that I had found something new and that my analysis was not totally off base.

Try it out yourself!

One of the most fun things about this project is that the problem itself is pretty easy to understand and study. The Ising model is a canonical example studied in any graduate statistical mechanics course, and it’s classical. The code is simple as well: I use the Metropolis algorithm for the Ising model, which is also a canonical example in any computational physics course. The code is less than 400 lines, and I’ve posted it on GitLab for anyone to play around with. It’s written in Fortran 90, which is easy to learn if you’ve done any coding before. On top of all that, you can get started on this problem with just a laptop (a far cry from the thousands of hours of CPU time required for my other work). My publication-quality figures use 512×512 lattices, but this size is overkill. Most of the interesting physics can be understood with much smaller lattices (e.g., 100×100), for which the simulations take only minutes on a modern laptop.

Thanks

I’m the only author listed on the manuscript, but many other people gave advice and feedback that were of great help in drafting this paper. Paul Krapivsky (BU) was a crucial source of advice and feedback as an expert in Ising dynamics. Anders W Sandvik, my PhD advisor and colleague, provided many pointers and recommendations along the way, especially when I was navigating the peer review process on my own. I’m also grateful for the feedback of Wen-Han Kao. Beyond the technical support, my wife, Vanessa Calaban, was an irreplaceable source of both emotional support and proofreading.

Disclaimer: Although this paper was published during my term as an AAAS Science & Technology Policy Fellow, the research and writing were all completed during my time at BU and NTU. None of the statements in this paper or this blog post should be interpreted as being made on behalf of AAAS or the US DOE.